The Great Slow Down: Why Science is Sputtering and How Web3 Can Help

Decelerating scientific progress. The problem with academic journals. Ideas for reforming the system from inside and revolutionary ideas for remaking the world of research.

There’s a quote from Douglas Adams that I have been thinking about a lot recently:

“I've come up with a set of rules that describe our reactions to technology:

1. Anything that is in the world when you're born is normal and ordinary and is just a natural part of the way the world works.

2. Anything that's invented between when you're 15 and 35 is new and exciting and revolutionary and you can probably get a career in it.

3. Anything invented after you're 35 is against the natural order of things.”

But even among the under-35 set, there’s a lot of anxiety these days about our rate of progress. There’s a sense that our species is like Icarus and the wax on our wings is starting to melt…

It’s not just the Luddites saying this.

Elon Musk says that we are summoning the demon by building AGI. Cryptocurrency both excites and terrifies people because it takes aim at functioning institutions. 3D printing is already creating ghost guns. Genetic editing promises to shift our definition of "human."

But despite these fears, our actual technical and scientific progress is slowing down.

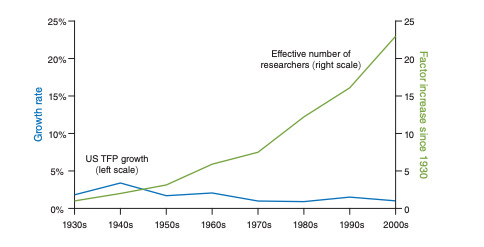

We are putting more effort into science than ever before. The number of scientists is growing at a rate of 4% per year. The number of scientific citations is growing at 8% per year. (Cowen)
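To make those growth rates concrete, here is a quick back-of-the-envelope sketch of the doubling times they imply. It assumes constant compound growth at the quoted rates, which is a simplification; the function name is just for illustration.

```python
import math

def doubling_time(annual_growth_rate: float) -> float:
    """Years for a quantity to double under constant compound annual growth."""
    return math.log(2) / math.log(1 + annual_growth_rate)

scientists_doubling = doubling_time(0.04)  # 4% per year, from the text
citations_doubling = doubling_time(0.08)   # 8% per year, from the text

print(f"Scientists double every {scientists_doubling:.1f} years")
print(f"Citations double every {citations_doubling:.1f} years")
```

Under that assumption, the research workforce doubles roughly every 18 years and the citation count roughly every 9.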

But even as we invest more, we are getting less impactful results.

Total Factor Productivity is a measure of tech's impact on growth. Its growth rate has slowed from 3% per year in the 1940s to under 1% today. (Cowen)

It takes 18x as many researchers to double transistors on a microprocessor as it did in the 1970s. (Bloom et al)

New ideas are taking far longer to be cited by other researchers, no matter how influential they eventually prove to be. (Chu, Evans)

Researchers are 40x less efficient in generating breakthroughs compared with their 1940s counterparts. (Bloom)

So maybe we’re not heading toward a technical singularity that dooms the species. Yay?

But this data is troubling, too. Our world has big problems that necessitate scientific breakthroughs.

So what exactly is going on? Well, there are two competing stories.

The first is that we have simply solved all the easy problems. We figured out electricity and thermodynamics. We cracked the secrets of the atom and the quantum. We have reached the point of diminishing marginal scientific returns.

But that story isn’t super convincing.

Yes, we made massive strides over the last 100 years. But science keeps offering new frontiers. We are only beginning to understand complexity and emergence. We have very little understanding of life, biology and consciousness. Our work in quantum physics seems to be opening more questions than it resolves. Our research into computer science and artificial intelligence is still nascent.

Scientific progress may have an eventual asymptote, but we’re nowhere near it.

So then there’s a second story.

This one says that we’re in a moment of profound institutional failure. It argues that the way that we produce science is broken.

We incentivize the wrong things. We fund the wrong projects. We share data too slowly. We are too cautious in revising our prior assumptions.

That’s the story we’re going to dive into today.

The Two Pillars of the Research System: Money and Status

Incentives are destiny. If you want to look at where a system has gone wrong, you need to follow the incentives.

Grants and Journals: the Twin Pillars of Academic Calcification

In the 17th Century, scientists shared progress by writing each other letters. This worked well enough for the well-connected. But other intellectuals -- or *gasp* commoners -- interested in ideas were SOL (shit outta luck). They had to wait years for the printing of long, expensive summary books.

This was – as you might have guessed – a slow way to spread insights.

In 1660, a handful of these epistolary scientists started their own nerdy drinking club. They would meet once a week in London to share their progress. Soon, the King of England -- a thinker and a drinker, himself -- granted them a royal charter. The Royal Society of London was born.

By 1665, they started publishing a journal of the club’s meetings. Each issue documented key ideas and experiments for members who had not been able to attend. This would become the first scientific journal. The Philosophical Transactions of the Royal Society was born. It remains the oldest continuously published scientific journal in the world.

It’s hard to overstate how important this breakthrough was. Rather than years, new ideas were being spread monthly. Soon other academic groups in the US, France and the UK were publishing journals of their own. The academic journal was born.

The Royal Society and its ilk consisted primarily of wealthy idle gentlemen. Endowed by university or by birthright, the Citizen Scientist had little need for money. Research was a hobby, not a career.

But the 19th Century's quickening pace of innovation attracted national governments. They brought resources -- and conditions -- to the world of science.

This started under Lincoln. In 1862, he signed the Morrill Act, which created public land-grant universities. In 1863, his administration created the National Academy of Sciences to support the Union in the Civil War. For the first time, the US Government was in the business of guiding research.

As America's challenges grew, so did its funding of science. The Manhattan Project gave way to the Cold War. To keep pace with Soviet advances, the US government committed to fund basic scientific research. It was a good bargain for academics. In exchange for working in the public interest, these universities received massive funding. In 2018, for example, 58% of funding for basic research came from federal or state governments.

But the government’s involvement introduced distortions to the scientific enterprise. In a time of constricting budgets, the government needed a tangible way to prove it was investing wisely. Grant-funding committees sought a bullet-proof metric they could leverage to explain funding decisions.

They found such a metric in the Impact Factor.

This metric was originally created to help librarians purchase journals. The Impact Factor measures how often a journal’s articles are cited. Grant committees consistently favor researchers who publish in the most heavily cited journals. It is, therefore, the editorial judgment of these journals that shapes our science.
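For readers who have never seen the calculation, here is a minimal sketch of the commonly used two-year Impact Factor. The journal numbers are hypothetical, and the function name is mine, not an official API.

```python
def impact_factor(citations_this_year: int, citable_items_prior_two_years: int) -> float:
    """Two-year Impact Factor.

    Citations received this year to articles the journal published in the
    previous two years, divided by the number of citable articles it
    published in those two years.
    """
    if citable_items_prior_two_years <= 0:
        raise ValueError("journal must have published at least one citable item")
    return citations_this_year / citable_items_prior_two_years

# Hypothetical journal: 200 citable articles over the prior two years,
# which were cited 900 times this year.
example_if = impact_factor(900, 200)
print(example_if)  # 4.5
```

Note what the number does and does not capture: it is an average over a whole journal, so it says nothing about the quality of any single paper published there.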

And that might be OK if these editorial boards dispassionately published the best science. But they do not. Because it turns out they are in the attention economy business. Publishers' business is selling subscriptions to large institutions. And business is good!

50% of the $19B industry is controlled by five publishing companies. Those companies are profit machines. The research they publish is funded by government grants. The peer review process is handled by volunteers whose salaries are paid by universities. Those same universities then pay huge amounts to license back their own research. No wonder the typical scientific publisher has a profit margin greater than Apple's.

But it’s not the economics that is most corrosive about publishers. It’s the influence they have on science.

Novel ideas are less likely to be published in the more conservative, heavily-cited journals. This is the case even though these papers, when published, generate a disproportionately high impact. The relatively low publishing of novel work is a problem because publishing metrics determine fundability. This, in turn, means that researchers pursuing breakthrough ideas are less likely to be funded. Two separate studies have shown this to be true both in the EU and in Switzerland. (https://www.science.org/content/article/funding-agency-s-reviewers-were-biased-against-scientists-novel-ideas)

Instead, publishers like to publish pieces from well-known scientists that reaffirm field biases. They are tethered to incrementalism. Science, thus, is subject to the same sets of media incentives that warp our news ecosystem. We publish only the most shocking results that somehow confirm our existing view of the world.

No wonder progress is slowing down.

What Would a Better World Look Like?

Our knowledge-production system was not designed to work this way. It was not designed at all. It has evolved in fits and starts. It is a testament to the perils of design by local optimization.

Those who want to escape its limitations can choose to either reform the system or try to replace it.

The Reform Approach

Revolution is costly. The existing system, for all its flaws, has major advantages. It has a scaffolding of financial support. It has the sheen of institutional legitimacy. It also has the accumulated optimizations of fifty years' worth of reforms. So it’s not clear that shedding it is the best course. For those more inclined to incrementalism, there are viable paths forward. Let's look at three:

Playing Word Games. Sometimes it can be liberating to change the vocabulary of a field. Words are symbols of concepts. As an idea becomes more developed, a single word takes on conceptual baggage. Consider “genetics.” Technically, “genetics” is the study of genes, the stretches of DNA that code for proteins. But the word became deeply tied to the related concept of heredity. When we believed that what our genes do was determined only by what we inherit from our parents, we could use "genetics" synonymously with heredity. To study genes was to study heritability. But when scientists discovered that the way genes are expressed can be shaped by our environment as well, the tight coupling with heredity became limiting. They had to invent new language to describe it even though they were still studying genes. Today “epigenetics,” the study of how the environment shapes gene expression, is a massive and fast-moving field. But to get the space to run, it had to free itself of the epistemic baggage of 150 years of evolutionary science.

Niche. Another approach is to identify a particular niche of research that can be incubated outside of mainstream science. This usually requires sheltering by a respected researcher. Take Integrated Information Theory. IIT is a leading theory for how “consciousness” or subjective experience emerges in living things. But Giulio Tononi (and his merry band of researchers at UW-Madison) could not have started by studying consciousness. Instead, Tononi began his career as a leading authority on sleep research. Only after establishing his bona fides was he able to transition his focus. “Consciousness” studies have been derided as pseudoscience by mainstream neuroscientists for a generation. But Tononi’s reputation and institutional backing allowed him to build a lab in a new niche. The lack of an established canon in consciousness has enabled his group to theorize and test paradigm-shifting ideas.

Technical Shifts. Finally, the development of new technology can also create critical moments where a field can be shoved in a new direction. The James Webb Space Telescope (JWST) promises potential revolutions in astrophysics. CERN’s LHC has forced shifts in the dominant standard model of particle physics. Large computing models enable the study of complexity in the life and social sciences. Of course, paradigm-shifting technologies are not exactly easy to develop.

The Revolutionary Approach

Reform has its merits. But if we could start over from scratch, if we could exit our existing system, how would we build a new research infrastructure?

An exit would require us to change the key institutions of science. We will need to shift both how we fund and disseminate science. But before we can do that, we have to start by agreeing on a goal for science. That's surprisingly difficult. But I’ll put a stake in the ground. The goal of science is to advance humanity’s demonstrably not-wrong understanding of the world.

For any given hypothesis, our goal is to use the scientific method to converge on a not-wrong solution. If, before an experiment, we were 10% confident the world is round and now we are 99% confident, then science has advanced our understanding. Thus, we can measure the value of our research by how much it increases our collective confidence in a hypothesis.
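How might "increase in collective confidence" be turned into a number? One option, and it is only my assumption here, is to measure the shift in log-odds, expressed in bits. Unlike a raw difference in percentages, this treats a move from 98% to 99% as harder won than a move from 50% to 51%. The function names below are illustrative.

```python
import math

def log_odds_bits(p: float) -> float:
    """Log-odds of probability p, measured in bits."""
    if not 0.0 < p < 1.0:
        raise ValueError("p must be strictly between 0 and 1")
    return math.log2(p / (1.0 - p))

def evidence_gained(prior: float, posterior: float) -> float:
    """Shift in log-odds (bits) between confidence before and after an experiment."""
    return log_odds_bits(posterior) - log_odds_bits(prior)

# The example from the text: 10% confident before, 99% confident after.
gain = evidence_gained(prior=0.10, posterior=0.99)
print(f"{gain:.1f} bits of evidence")
```

For the round-world example this comes out to about 9.8 bits. An experiment that leaves confidence unchanged scores zero, and one that lowers confidence in a hypothesis scores negative, which is still useful information.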

So how could we fund science that achieves these goals?

There are two novel mechanisms that I’ve been exploring.

The first is community-based funding. In this model, a community or an institution sets goals for researchers and directly funds research related to their goals. Of course, this has been happening forever in corporate R&D labs. Freedom from grant-writing enables them to move faster in targeted directions.

Today, networks may be able to replicate this model. DAOs enable networks to pool resources to crowdfund research initiatives. As a result, we’re seeing some promising new models emerge.

VibeBio, for example, launched earlier this year. They are a DAO of patients, investors and scientists who work together to identify motivated but under-served patient populations, and then to fund and participate in research.

Similar communities are forming to fund initiatives in climate science and to test fundamental theories.

The second novel funding mechanism involves developing an old-favorite concept of the crypto-community: the prediction market.

As Robin Hanson, an economics professor at George Mason, explains:

“Imagine a betting pool or market on most disputed science questions, with the going odds available to the popular media, and treated socially as the current academic consensus. Imagine that academics are expected to "put up or shut up" and accompany claims with at least token bets, and that statistics are collected on how well people do.

This would be an "idea futures" market, which I offer as an alternative to existing academic social institutions. Somewhat like a corn futures market, where one can bet on the future price of corn, here one bets on the future settlement of a present scientific controversy.”

Our global goal, then, would be to move these betting markets toward decisive and rapid resolution. Our scientists would have a reputational and financial stake in achieving this, too.
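Hanson's description above doesn't say how such a market would set its odds. One possibility, assumed here purely for illustration, is the logarithmic market scoring rule (LMSR), a mechanism Hanson himself later proposed for prediction markets. The class name, the liquidity value, and the trade sizes below are all hypothetical.

```python
import math

class IdeaFuturesMarket:
    """Two-outcome market (hypothesis true / false) priced with LMSR."""

    def __init__(self, liquidity: float = 100.0):
        self.b = liquidity        # higher b means prices move less per trade
        self.shares = [0.0, 0.0]  # outstanding shares: [true, false]

    def _cost(self, shares) -> float:
        # LMSR cost function: C(q) = b * ln(sum_i exp(q_i / b))
        return self.b * math.log(sum(math.exp(q / self.b) for q in shares))

    def price(self, outcome: int) -> float:
        """Current price of an outcome, readable as the market's probability."""
        weights = [math.exp(q / self.b) for q in self.shares]
        return weights[outcome] / sum(weights)

    def buy(self, outcome: int, amount: float) -> float:
        """Buy `amount` shares of an outcome and return what the trader pays."""
        new_shares = list(self.shares)
        new_shares[outcome] += amount
        paid = self._cost(new_shares) - self._cost(self.shares)
        self.shares = new_shares
        return paid

market = IdeaFuturesMarket(liquidity=100.0)
opening_odds = market.price(0)  # no bets yet, so even odds
paid = market.buy(0, 50.0)      # a researcher backs the hypothesis
updated_odds = market.price(0)

print(f"Odds moved from {opening_odds:.2f} to {updated_odds:.2f}; bet cost {paid:.2f}")
```

The point of the sketch is the incentive: moving the public odds costs the bettor real money, and that money is only recovered if the hypothesis holds up.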

Both of these models would de-emphasize the traditional, LaTeX PDF article. Instead, they would focus on either a specific outcome or market-moving information. In both cases, the incentives of the researcher would shift from “construct an argument” to “share data that builds momentum.”

And that could point the way to a faster, more vibrant research ecosystem that looks less like Nature and more like ArXiv.

Founded in 1991 and now hosted at Cornell, ArXiv provides a free repository of pre-print scientific papers. The site continues to grow in popularity. Its role in the daily habits of scientists cannot be overstated.

To quote a recent Atlantic article: “During recent library renovations, a survey quizzed CERN staff about what they wanted: New furniture? Better coffee? ‘What they said is “Put a big screen in there and write a script that automatically displays the new daily submissions to ArXiv,”’ Kohls says. ‘It will probably be the center of the CERN Library.’”

The excitement around ArXiv makes sense. If Twitter is catnip for reporters, ArXiv could play a similar role for researchers. It allows them to observe and comment on work-in-progress. In doing so, it accelerates the pace of change.

But ArXiv remains a skeuomorphic technology. Despite being of the internet, it still uses the metaphor of a journal article. There’s no reason for that.

A research community that operates online rather than in print can work with richer media types. The DeSci Foundation is leading a charge here. They have created Dynamic Research Objects. These constructs share more in common with a Git repository than a journal. They "combine text, code, data, videos, peer-reviews, annotations, and commentaries" in a way that enables auditing and replication. They also provide an immutable record of who contributed different insights to a project. This, of course, provides a more robust accounting of credit than being listed first on an article.
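To give a feel for what a record like that could look like, here is a toy sketch. It is not the DeSci Foundation's actual format; the class, field names, and sample contributions are all hypothetical. Each contribution stores a hash of the one before it, so the log is tamper-evident, a rough stand-in for a truly immutable record.

```python
import hashlib
import json
from dataclasses import dataclass, field

@dataclass
class ResearchObject:
    """Hypothetical research bundle with an append-only contribution log."""
    title: str
    log: list = field(default_factory=list)

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash every field except the stored hash itself.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def contribute(self, author: str, kind: str, content: str) -> str:
        """Append a contribution, chaining its hash to the previous entry."""
        previous = self.log[-1]["hash"] if self.log else ""
        entry = {"author": author, "kind": kind, "content": content, "previous": previous}
        entry["hash"] = self._digest(entry)
        self.log.append(entry)
        return entry["hash"]

    def verify(self) -> bool:
        """True if no entry has been altered, removed, or reordered."""
        previous = ""
        for entry in self.log:
            if entry["previous"] != previous or entry["hash"] != self._digest(entry):
                return False
            previous = entry["hash"]
        return True

    def credit(self) -> dict:
        """Count contributions per author."""
        counts = {}
        for entry in self.log:
            counts[entry["author"]] = counts.get(entry["author"], 0) + 1
        return counts

paper = ResearchObject("Example study")
paper.contribute("alice", "data", "measurements.csv")
paper.contribute("bob", "code", "analysis.py")
paper.contribute("alice", "text", "Draft of the findings")

intact = paper.verify()             # the untouched log checks out
paper.log[1]["author"] = "mallory"  # someone rewrites history...
tampered_ok = paper.verify()        # ...and verification now fails
print(intact, tampered_ok)
```

Credit here is a per-contribution ledger covering data, code, and text alike, rather than a position in an author list.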

In the interplay of these ideas -- new funding mechanics and richer, more rapid knowledge sharing -- we can see the potential of restarting the engine of progress. Our system would scarcely have been imaginable to those intrepid souls who formed the Royal Society in 1660s London.

That, of course, is exactly the point.